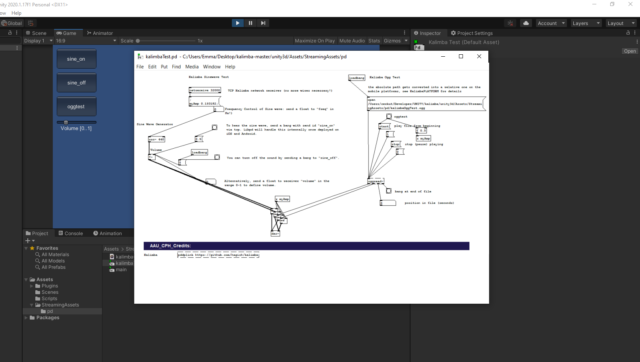

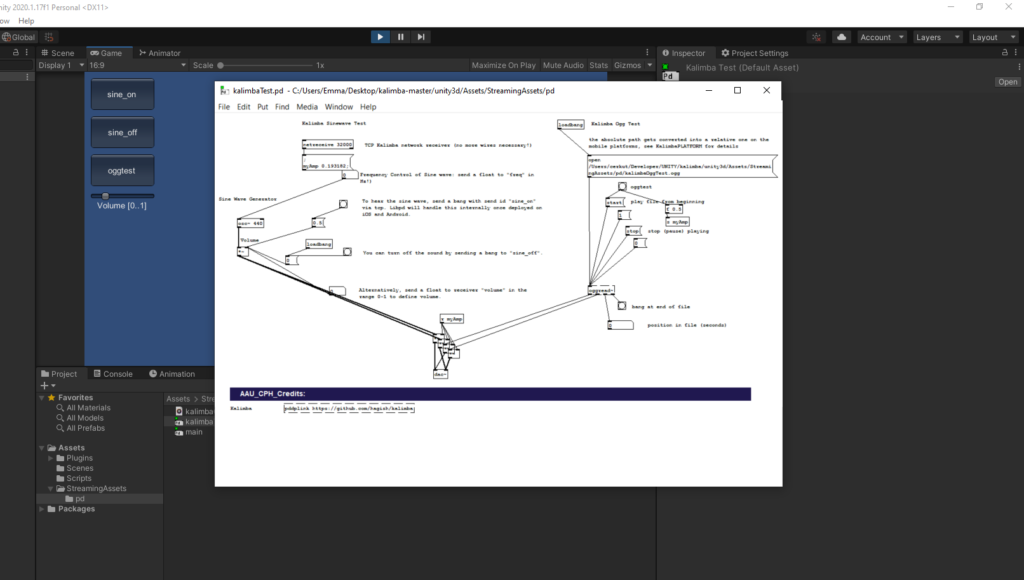

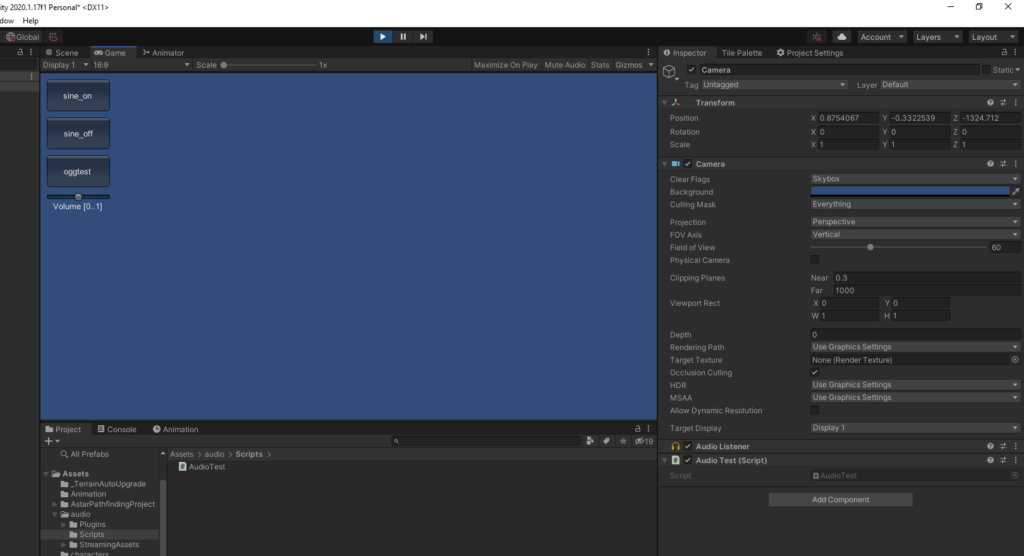

Over the past month I have made two important additions to my project. With the video game mostly complete, the next step was to connect Unity and Pure Data so that game data could direct the generative soundtrack. There are two main ways to connect them: Kalimba, which is mainly intended for mobile development, and Enzien Audio’s Heavy. Because Kalimba is aimed at mobile games, it has the drawback that the Pure Data patch must be open while the game is running. Heavy does not have this issue, but it supports only the more common Pure Data objects rather than all of them. Since the neural network is fairly complicated and may need objects that Heavy does not support, I chose Kalimba, which made more sense especially in the research phase of this project. Below are pictures of a sample Pure Data patch connected to Unity using Kalimba. When the sine_on button is pressed in Unity, a tone generated by the Pure Data patch is played.

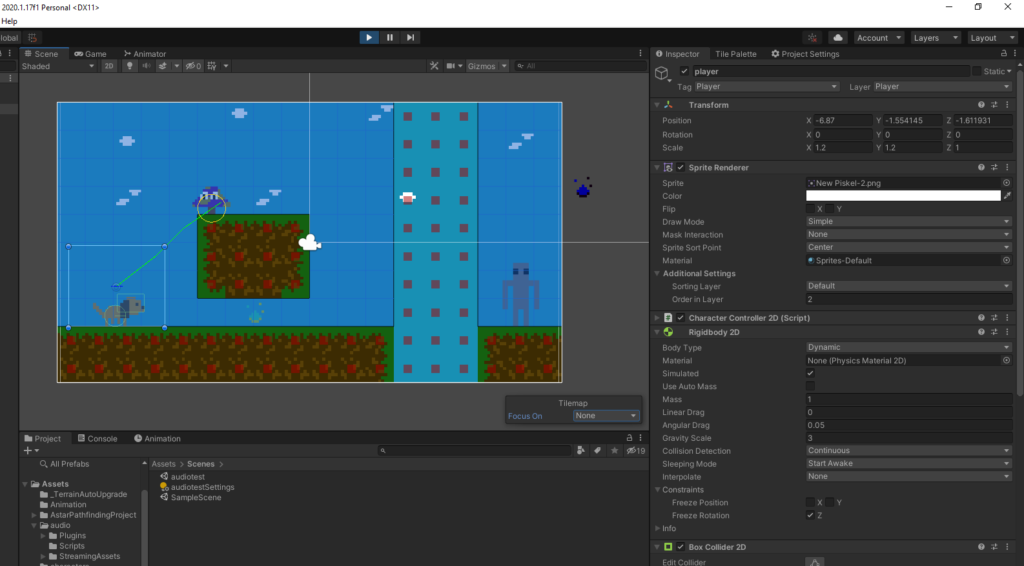

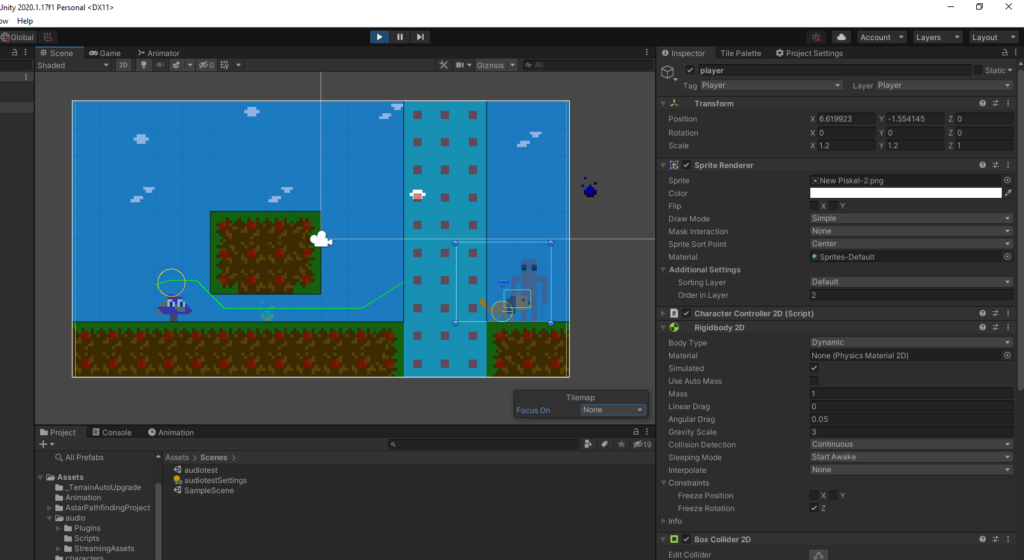

The other important addition is the A* pathfinding algorithm. When attached to enemies in the game, it makes them find the shortest path to the player character and move along it. Apart from the neural-network-based soundtrack, this is the other major use of AI in this project. Below are two screenshots of the algorithm in action, showing the path the enemy will take towards the player as a lime green line. The red squares are grid points that the enemy cannot move to, such as obstacles, the ground, or pits.
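To make the idea concrete, here is a minimal sketch of A* on a small grid, written in Python rather than as the Unity code used in the game. The grid layout, the `astar` function name, the 4-directional movement, and the Manhattan-distance heuristic are all assumptions chosen for illustration; cells marked `1` play the role of the red blocked squares.

```python
import heapq

def astar(grid, start, goal):
    """Return the shortest 4-connected path from start to goal as a list of
    (row, col) cells, or None if the goal cannot be reached.
    grid[r][c] == 1 marks a blocked cell (hypothetical encoding)."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates with unit-cost 4-way moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    came_from = {}
    best_cost = {start: 0}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start, then reverse.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost.get(current, float("inf")):
            continue  # stale heap entry; a cheaper route was already found
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue  # off the grid
            if grid[nr][nc] == 1:
                continue  # blocked cell
            new_cost = cost + 1
            if new_cost < best_cost.get((nr, nc), float("inf")):
                best_cost[(nr, nc)] = new_cost
                came_from[(nr, nc)] = current
                heapq.heappush(
                    open_heap, (new_cost + heuristic((nr, nc)), new_cost, (nr, nc))
                )
    return None

# Example map: the enemy starts at the top-left, the player is at the bottom-right.
example_grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
example_path = astar(example_grid, (0, 0), (3, 3))
```

In a game, the enemy would typically step along the returned cells and recompute the path when the player moves; how often to recompute is a design choice this sketch does not address.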

The neural network is also mostly finished now, and follows a four-layer structure. First, the initial game inputs (location on the map, progress in the game, current player action) go into the first neuron layer, which controls the general mood of the current music. The constraints on the piece derived from the chosen mood are sent into the second layer, alongside a few new game inputs (puzzle and combat stats and upgrades). This layer handles the rhythm of the music: the time signature, which rhythm instruments are used, the tempo, etc. It provides further constraints, which are sent into the third layer alongside more game inputs (% of map explored, side vs. main quest progress). The third layer handles the harmony, which generally means the chords used and how quickly they change. Finally, this layer sends its constraints into the fourth layer, which takes no new game input and generates the main melody of the piece. This is then output as the music the player hears while in the game. I plan to create an album of example music generated by playing the game in different ways, to hear how the composition varies between playthroughs.
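The flow of constraints between the four layers can be sketched in Python as follows. This shows only the data flow, not the neural network itself, which lives in the Pure Data patch. Every function name, dictionary key, and rule here is a hypothetical placeholder standing in for a trained layer.

```python
def mood_layer(game):
    # Layer 1: initial game inputs -> general mood (placeholder rule).
    mood = "tense" if game["player_action"] == "combat" else "calm"
    return {"mood": mood}

def rhythm_layer(constraints, game):
    # Layer 2: mood constraints + new game inputs -> rhythm settings.
    tempo = 140 if constraints["mood"] == "tense" else 90
    tempo += 5 * game["upgrades"]  # placeholder: upgrades speed the music up
    return {**constraints, "tempo_bpm": tempo, "time_signature": "4/4"}

def harmony_layer(constraints, game):
    # Layer 3: rhythm constraints + more game inputs -> chords and change rate.
    if constraints["mood"] == "tense":
        chords = ["Am", "F", "C", "G"]
    else:
        chords = ["C", "G", "Am", "F"]
    bars_per_chord = 1 if game["map_explored"] > 0.5 else 2
    return {**constraints, "chords": chords, "bars_per_chord": bars_per_chord}

def melody_layer(constraints):
    # Layer 4: no new game input; placeholder melody uses each chord's root note.
    return [chord[0] for chord in constraints["chords"]]

def generate(game):
    """Run the four stages in order, passing the growing constraints along."""
    constraints = mood_layer(game)
    constraints = rhythm_layer(constraints, game)
    constraints = harmony_layer(constraints, game)
    return constraints, melody_layer(constraints)

# Hypothetical snapshot of game state.
example_game = {"player_action": "combat", "upgrades": 2, "map_explored": 0.75}
example_constraints, example_melody = generate(example_game)
```

The point of the sketch is the ordering: each stage sees everything decided before it, and only the first three stages receive new game inputs.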