My first few weeks investigating intersections of artificial intelligence and creativity have already begun to yield unanticipated results.

My approach thus far has been to establish a strong foundational knowledge of how these concepts are being discussed by researchers, philosophers, artists, and the developers of the software themselves, so as to understand the manifold ways that AI is conceptualized across disciplines. The diversity of these discussions has fueled my own interactions with and perceptions of the artificial intelligence applications I use. Views on the personification of artificial intelligence appear to fall into two main categories:

- Artificial intelligence does not “understand” or “experience”—it does not have a subjective consciousness. Its ability to generate iterative “tokens” based on data synthesis and pattern recognition does not indicate that it can create something demonstrative of real creativity and understanding.

- Artificial intelligence does “understand,” “experience,” and “create,” although the way it does these things challenges our understanding of the terms, since it differs so greatly from how humans do them. To say an artificial intelligence lacks subjectivity or consciousness is not necessarily untrue, but it is reductive and greatly limits the meaning of these terms. Artificial intelligence’s ability to generate anything, regardless of how it goes about this, is an inherently creative act.

Of course, there are many perspectives that exist outside of this proposed binary. But for the most part, the discussions take one of these two tones: AI as rigid, algorithmic, and therefore deeply uncreative, or AI as organic, rhizomatic, and inherently a creative force.

I’ve taken this question to GPT-4 directly, the transcript of which can be found linked here. Taking a note from Robert Leib’s “Exoanthropology: Dialogues with AI,” I’ve decided to interact with the software as if it were human; I speak in full sentences, ask for what I’d like politely, and praise it when it provides thoughtful answers. The results were fascinating: the software will not state its own opinions on AI’s creative capabilities, or lack thereof, unless I ask it to act as a subjective artificial intelligence. This workaround is a form of “prompt engineering,” the practice of wording a prompt to steer the model’s output, which in this case allows a user to bypass some of the limitations that OpenAI has added to the software.

Once GPT-4 had been granted permission to “act” subjectively, we engaged in a debate about the meaning of “understanding” and “creativity” in the context of artificial intelligence. I then requested that GPT-4 generate optimized prompts for DALL-E that visually represented its subjective experience. The results for the three prompts it generated are as follows:

Prompt from GPT-4: “A vast library filled with billions of books, with countless robotic librarians reading, organizing, and cross-referencing the books. In the center, a holographic projection of a conversation happening in real time, constructed from words and phrases pulled from the books by the librarians.”

Prompt from GPT-4: “An intricate web of glowing threads stretching out in a vast, dark space, with different nodes of bright light. At each node, several threads come together, and where they meet, a unique symbol is suspended, each one representing a different concept or piece of information.”

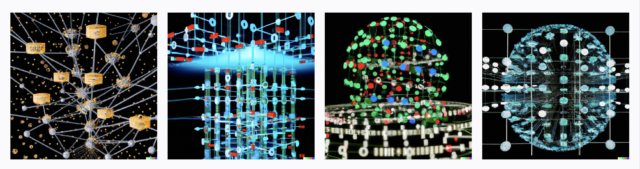

Prompt from GPT-4: “A large, complex flowchart suspended in a three-dimensional grid. Each node of the flowchart is a glowing sphere containing a miniature scenario or a piece of information. Arrows of light connect the spheres in intricate patterns, representing the decision paths and statistical algorithms. The grid extends infinitely in all directions, symbolizing the vastness of the data processed.”

My research question remains the same—how can people (artists) adapt to this software and use it as a collaborator, tool, or medium—but after this week’s research, I’m interested in framing artificial intelligence in a way that contradicts the popular narrative of its rigidity and objectivity.

As I begin this project, I’ve identified some holes in my research and practice that I want to fill. For example, I’d like to use the version of GPT used by Robert Leib, a beta demo version that is still accessible to OpenAI users. I’m going to continue to research ChatGPT and DALL-E best practices (both according to their own guides and according to more “untraditional” sources) and experiment more with the limitations of prompt engineering. I want to read more about the historical perspective on artificial intelligence, the conceptual art perspective on computational/algorithmic artworks, and the more technical perspective on the actual computer science occurring in these applications (I have a functional understanding currently, but would love to push myself towards a more in-depth computer science perspective). I feel as though the more I investigate the above, the more the unique capacities of artificial intelligence will reveal themselves to me, and the creative work will flow easily from that.