My project focuses on using neural networks to create a unique generative soundtrack for a video game, based on each user's interaction. While planning the video game I will use for my project, I decided the mechanics and flow of the game should feel familiar. It will follow a very popular platformer style of movement and will include some puzzles and some combat.

I also decided there will be two main ways to direct the soundtrack as you play. The first is a direct mapping. The game is played by switching between three characters, each of which has a special ability or mechanic. These special abilities will be directly mapped to sound. For example, one mechanic is the ability to draw new platforms. (I am still in the planning process for much of the game, so more specifics on how that mechanic will work are to come.) When the player draws a new platform, the movement of their mouse will translate directly to the current melody of the soundtrack.

The other type of mapping is inherently indirect. The game allows you to focus on combat or puzzles, depending on your preference. As you do more puzzle-based side missions, the puzzles will become slightly harder and more prominent; if you avoid them, they will decrease in difficulty. The same is true of the combat in the game. Within the neural network that is directing the game, there will be a layer devoted to altering the inputs based on whether the player is focused on puzzles or on combat. A lot of gameplay must happen before the system can establish a player as one or the other, and altering one layer in the network will not be audible to the user as a specific melody or rhythm. Instead, it will be heard as an overall change to the soundtrack, such as the speed of the melody, the time signature, or the dynamics.
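To make the direct mapping more concrete, here is a minimal Python sketch of one way mouse movement could become melody. It is only an illustration under stated assumptions: the function name `mouse_path_to_melody`, the pentatonic scale, the 720-pixel screen height, and the idea of using only the vertical mouse position are all hypothetical, since the platform-drawing mechanic is still being planned.

```python
# Hypothetical sketch: every name and constant here is an assumption,
# not part of the game or its Pure Data patches.
C_MAJOR_PENTATONIC = [60, 62, 64, 67, 69]  # MIDI note numbers, starting at middle C


def mouse_path_to_melody(y_positions, screen_height, scale=C_MAJOR_PENTATONIC):
    """Map mouse y-coordinates (0 = top of screen) to MIDI notes in a scale."""
    melody = []
    for y in y_positions:
        # Clamp to the screen, then flip so higher on screen means higher pitch.
        clamped = min(max(y, 0), screen_height)
        height_fraction = 1.0 - clamped / screen_height
        # Pick the scale degree for this height, capping at the top note.
        index = min(int(height_fraction * len(scale)), len(scale) - 1)
        melody.append(scale[index])
    return melody


# A mouse stroke that rises from the bottom of the screen to the top.
melody = mouse_path_to_melody([700, 540, 360, 180, 20], screen_height=720)
```

Quantizing to a fixed scale is one possible design choice: it keeps whatever the player draws in key with the rest of the soundtrack, at the cost of some expressive range.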

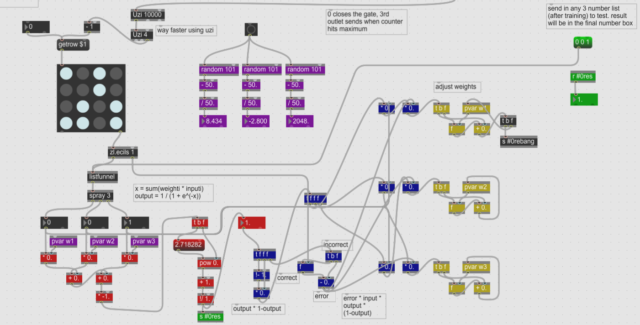

I have built a very simple neuron in Max/MSP, a musical programming language similar to Pure Data. I plan to use Pure Data for the actual game, since it is lightweight enough to run within Unity and Max/MSP is not. An image of this initial neuron is below. Since it is only one neuron, no layers are formed yet.

This neuron takes in training data consisting of four examples, each a row of four bits. This can be seen in the large square with sixteen dots that are either light blue or gray. Blue means “on” or 1 and gray means “off” or 0, so the training data can also be read as 1010, 0010, 0101, 1101. In each row, the first three bits are the input and the last bit is the expected output, so this data tells the system that 101 → 0, 001 → 0, 010 → 1, and 110 → 1. The system does not know the rule that created this data and must try to figure out the rule from the data alone. When a new input is put in, say 111, the system will return either a 1 or a 0 based on its training.
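The same idea can be sketched in a few lines of Python. This is not a translation of the Max/MSP patch: it assumes a basic perceptron with a step activation and the classic perceptron learning rule, and the names `predict` and `train`, the learning rate, and the epoch count are all illustrative choices.

```python
# Hypothetical single-neuron sketch; the learning rule and all
# hyperparameters are assumptions, not taken from the Max/MSP patch.
TRAINING_DATA = [
    ((1, 0, 1), 0),
    ((0, 0, 1), 0),
    ((0, 1, 0), 1),
    ((1, 1, 0), 1),
]


def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(data, epochs=20, learning_rate=1.0):
    """Adjust weights and bias whenever the neuron's guess is wrong."""
    weights = [0.0, 0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in data:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


weights, bias = train(TRAINING_DATA)
guess_for_new_input = predict(weights, bias, (1, 1, 1))
```

Note that these four examples fit more than one rule (for instance, "copy the middle bit" or "invert the last bit"), so the answer for an unseen input such as 111 depends on which weights the neuron happens to settle on.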

Below are two images of Pure Data. The first shows a simple synth that can change one sine wave into a multitude of different timbres using additive synthesis. The second shows a simple step sequencer in 5/4, which plays a certain sample on every beat in a loop. These are the types of musical instruments that will be directed by the neuron I build. The inputs, instead of being three numbers, will be player movements or decisions, and the output will be similar to the output of the musical instruments shown in Pure Data. I hope to continue building this neuron up into a network of neurons, and to finish planning the game in the coming weeks, so that I can start putting all the pieces together and experimenting with them in tandem.
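For readers unfamiliar with additive synthesis, the technique behind the synth can be sketched in Python: sine waves at whole-number multiples of a base frequency are summed, and the relative loudness of each harmonic shapes the timbre. This is a generic illustration, not a port of the Pure Data patch; the function name `additive_tone`, the 44100 Hz sample rate, and the harmonic amplitudes are assumed values.

```python
import math

SAMPLE_RATE = 44100  # samples per second; an assumed value


def additive_tone(fundamental_hz, harmonic_amplitudes, duration_s,
                  sample_rate=SAMPLE_RATE):
    """Sum sine-wave harmonics of one fundamental into a list of samples."""
    total_amplitude = sum(abs(a) for a in harmonic_amplitudes) or 1.0
    num_samples = round(duration_s * sample_rate)
    samples = []
    for n in range(num_samples):
        t = n / sample_rate
        # Harmonic k (0-based) sits at (k + 1) times the fundamental frequency.
        value = sum(
            amplitude * math.sin(2 * math.pi * fundamental_hz * (k + 1) * t)
            for k, amplitude in enumerate(harmonic_amplitudes)
        )
        samples.append(value / total_amplitude)  # keep samples within [-1, 1]
    return samples


# One harmonic gives a plain sine wave; adding more changes the timbre.
pure_sine = additive_tone(220.0, [1.0], duration_s=0.01)
brighter = additive_tone(220.0, [1.0, 0.5, 0.33, 0.25], duration_s=0.01)
```

In a setup like the one described, the list of harmonic amplitudes is the kind of parameter a neuron's output could drive, so that player input reshapes the timbre rather than triggering fixed sounds.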